What's shipping today

Our QML work lives in the finance_lab workspace: generative models for synthetic financial data, HMM-labelled market regimes as conditioning variables, and an architecture search over tree and quantum models. Each item below is code in the repo today, and the screenshots are real outputs.

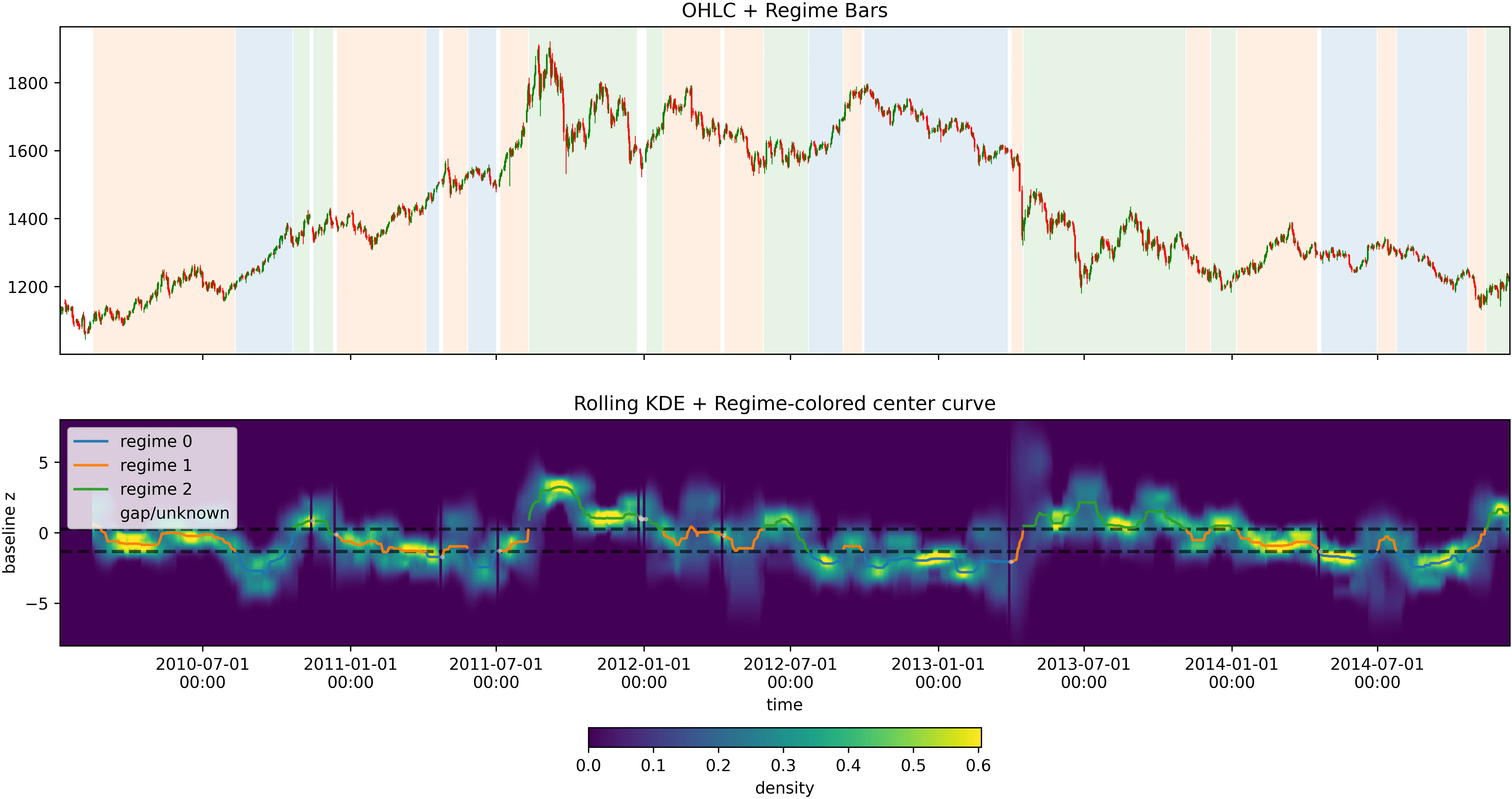

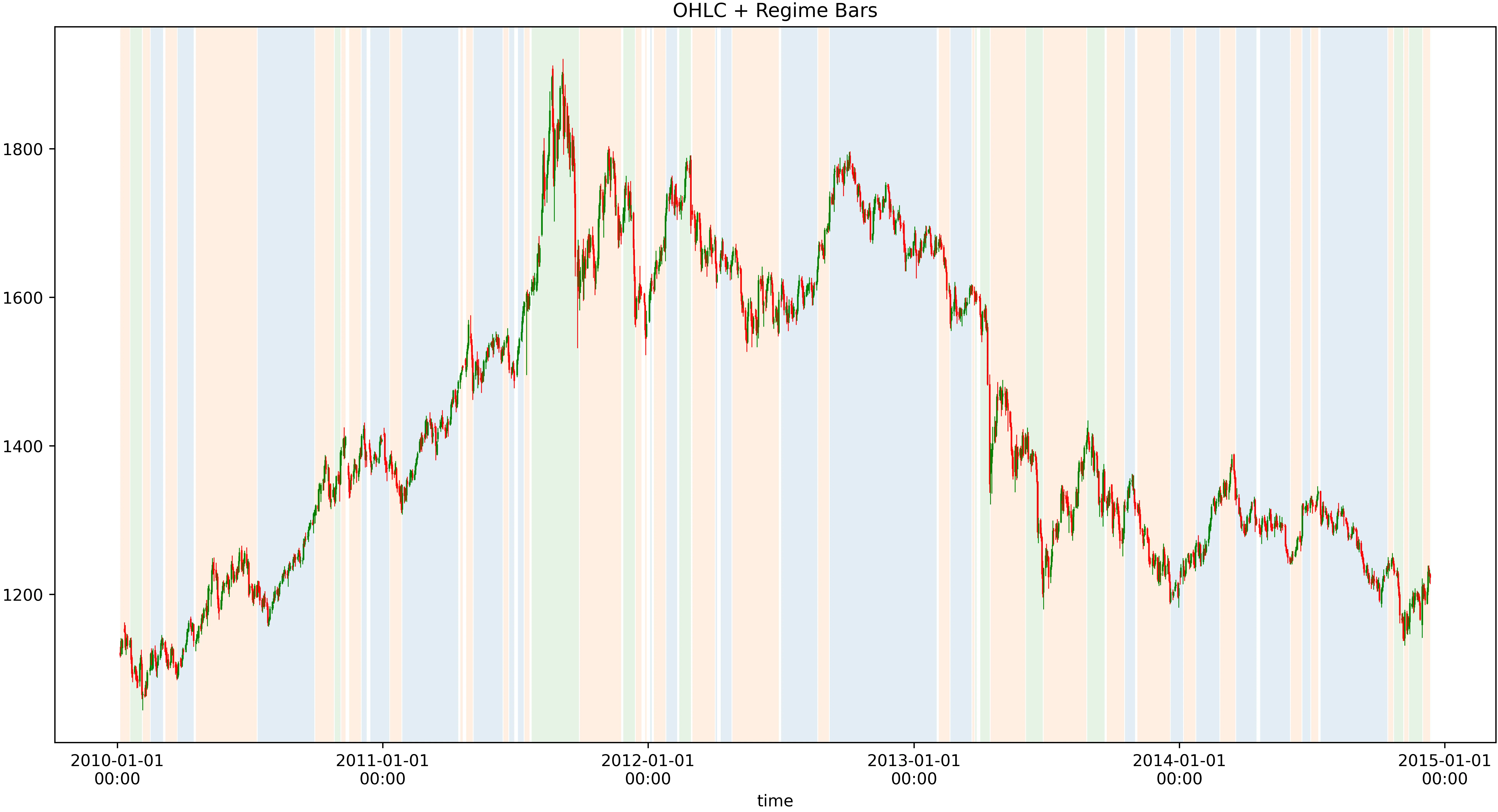

HMM regime labelling

Rolling realized volatility, skew, and cross-correlation are fed to a Hidden Markov Model; the inferred regimes become conditioning variables for the generative models and classifiers downstream.
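
As a rough illustration of the feature step, the sketch below computes rolling realized volatility, skew, and a pairwise correlation from two return series using only the Python standard library. The function name `rolling_features`, the window length, and the choice of population statistics are assumptions made for this example, not the repo's actual code. Each output row is the kind of observation vector that could then be passed to an HMM for regime inference.

```python
import math
import statistics


def rolling_features(returns_a, returns_b, window):
    """Hypothetical helper: one (realized_vol, skew, corr) row per window.

    Volatility and skew are computed on series A; corr is the rolling
    correlation between series A and series B.
    """
    rows = []
    for end in range(window, len(returns_a) + 1):
        a = returns_a[end - window:end]
        b = returns_b[end - window:end]
        # Realized volatility: square root of the sum of squared returns.
        realized_vol = math.sqrt(sum(r * r for r in a))
        mean_a, std_a = statistics.fmean(a), statistics.pstdev(a)
        mean_b, std_b = statistics.fmean(b), statistics.pstdev(b)
        # Population skewness: mean cubed deviation divided by std cubed.
        if std_a > 0:
            skew = sum((r - mean_a) ** 3 for r in a) / (window * std_a ** 3)
        else:
            skew = 0.0
        # Pearson correlation from the population covariance.
        cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b)) / window
        corr = cov / (std_a * std_b) if std_a > 0 and std_b > 0 else 0.0
        rows.append((realized_vol, skew, corr))
    return rows
```

With more than two assets, the single correlation column would typically be replaced by a summary of the pairwise correlation matrix, such as its mean off-diagonal entry.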

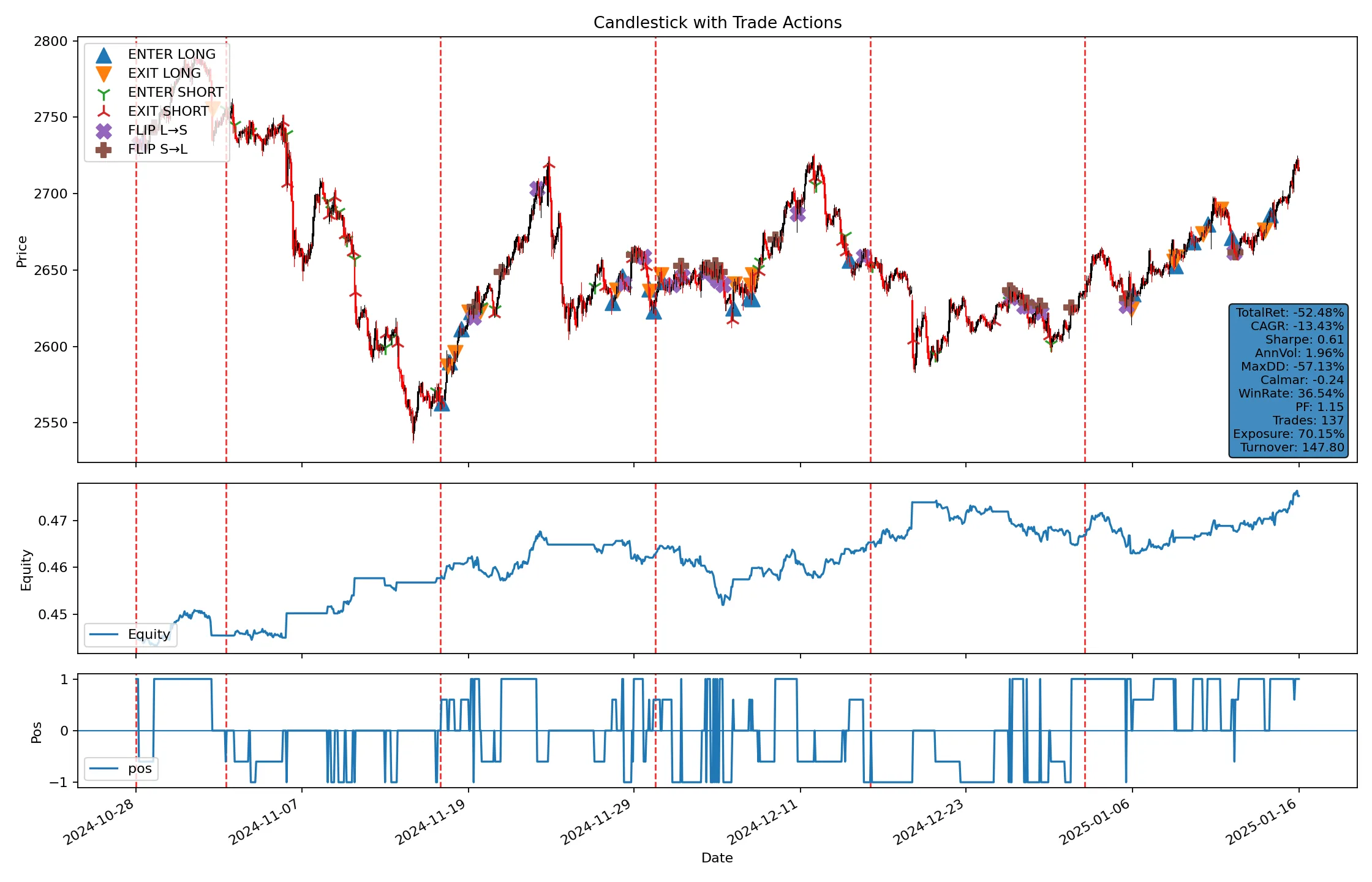

Conditional TimeGAN + QCBM

A conditional TimeGAN uses a Quantum Circuit Born Machine (QCBM) as its noise source to generate regime-aware synthetic market data. It is used to stress-test strategies on scenarios that do not appear in historical windows.
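
To make the Born-machine idea concrete, here is a minimal sketch of a two-qubit circuit (two RY rotations followed by a CNOT) simulated in plain Python. Bitstrings are sampled with probability equal to the squared amplitude, and those samples are what a generator would consume in place of Gaussian noise. The circuit layout and all function names are assumptions for illustration; the actual QCBM ansatz and its coupling to TimeGAN are not described here.

```python
import math
import random


def apply_ry(state, qubit, theta):
    """Apply RY(theta) to `qubit` of a real-valued statevector."""
    c, s = math.cos(theta / 2), math.sin(theta / 2)
    new = list(state)
    bit = 1 << qubit
    for i in range(len(state)):
        if (i & bit) == 0:
            a0, a1 = state[i], state[i | bit]
            new[i] = c * a0 - s * a1
            new[i | bit] = s * a0 + c * a1
    return new


def apply_cnot(state, control, target):
    """Swap the target-bit amplitudes wherever the control bit is 1."""
    new = list(state)
    for i in range(len(state)):
        if (i & (1 << control)) and not (i & (1 << target)):
            j = i | (1 << target)
            new[i], new[j] = state[j], state[i]
    return new


def born_probabilities(thetas):
    """Born-rule distribution of the hypothetical RY + CNOT circuit."""
    state = [1.0, 0.0, 0.0, 0.0]  # start in |00>
    state = apply_ry(state, 0, thetas[0])
    state = apply_ry(state, 1, thetas[1])
    state = apply_cnot(state, 0, 1)
    # RY keeps amplitudes real, so probability is the amplitude squared.
    return [amp * amp for amp in state]


def sample_bitstrings(thetas, n_samples, seed=0):
    """Draw basis-state indices from the Born distribution."""
    rng = random.Random(seed)
    probs = born_probabilities(thetas)
    return rng.choices(range(len(probs)), weights=probs, k=n_samples)
```

Training a QCBM means adjusting `thetas` so the sampled distribution matches a target; that optimisation loop is omitted from this sketch.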

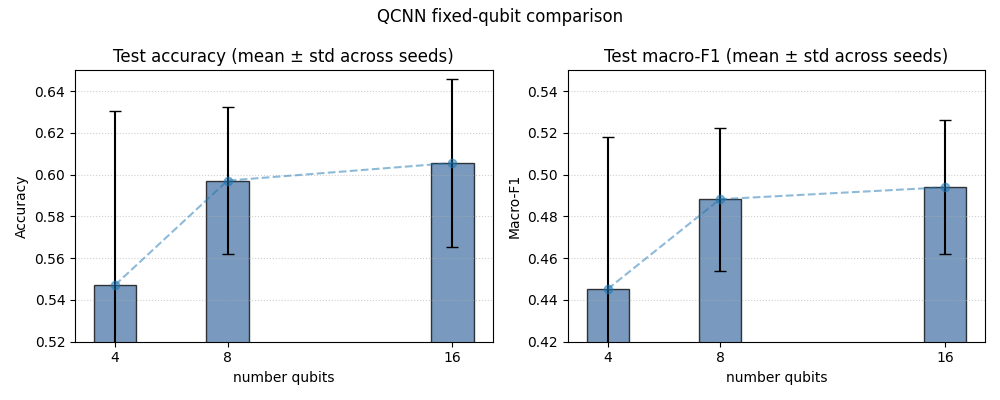

Architecture search

Optuna-driven hyperparameter search across tree, neural, and QCNN models, scored with walk-forward cross-validation. The chart shows QCNN macro-F1 optimisation over trials.
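
For readers unfamiliar with the metric, macro-F1 is the unweighted mean of the per-class F1 scores, so a rare regime counts as much as a common one. The following is a minimal standard-library sketch of that definition; the function name is an assumption, and in practice a library implementation would usually be used instead.

```python
def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 over all labels that appear."""
    labels = sorted(set(y_true) | set(y_pred))
    scores = []
    for label in labels:
        pairs = list(zip(y_true, y_pred))
        tp = sum(1 for t, p in pairs if t == label and p == label)
        fp = sum(1 for t, p in pairs if t != label and p == label)
        fn = sum(1 for t, p in pairs if t == label and p != label)
        # F1 = 2*TP / (2*TP + FP + FN); defined as 0 when the class is empty.
        denom = 2 * tp + fp + fn
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)
```

In a hyperparameter search, a score like this would be averaged over the validation folds and returned as the objective value for each trial.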

Moving into the Quantum toolkit

The QML building blocks are being factored into the closed-source Quantum toolkit that sits on top of Omega Functions. This is what the toolkit ships today:

- Statevector simulator with mid-circuit projective measurement and reset

- `QuantumLayer` integrating with PyTorch via tch-rs — parameter-shift gradients flow through tch autograd

- Pure-Rust CMA-ES gradient-free optimiser for noisy or non-differentiable loops

- Surrogate trainer implementing arXiv:2505.05249

- QML encoding layers: angle, IQP, hardware-efficient

- `circuit!` DSL and Aria surface DSL: symbolic parameters and annotations that export to Lean 4 / Rocq
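
The parameter-shift gradients mentioned in the list can be illustrated on the smallest possible case: a single RY(θ) rotation on |0⟩, whose ⟨Z⟩ expectation is cos θ. The sketch below is written in Python for brevity and is not the toolkit's `QuantumLayer` API; the function names are assumptions. It shows that two shifted circuit evaluations recover the exact derivative, −sin θ, without finite-difference error.

```python
import math


def expectation_z(theta):
    """<Z> after RY(theta) on |0>; analytically equal to cos(theta)."""
    amp0 = math.cos(theta / 2)
    amp1 = math.sin(theta / 2)
    return amp0 ** 2 - amp1 ** 2


def parameter_shift_grad(f, theta, shift=math.pi / 2):
    """Parameter-shift rule for a gate generated by a Pauli operator.

    Uses two evaluations of f at theta +/- shift; exact for such gates.
    """
    return (f(theta + shift) - f(theta - shift)) / (2 * math.sin(shift))
```

For example, `parameter_shift_grad(expectation_z, 0.7)` agrees with `-math.sin(0.7)` up to floating-point rounding. On hardware or a shot-based simulator, each evaluation of `f` would be an estimated expectation value rather than an exact one.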

The Quantum toolkit is closed source; the underlying Omega Functions runtime is open source at github.com/Anzaetek/omega-functions-public.

Where QML earns its keep in finance

Synthetic data for stress testing

Regime-aware synthetic time series let you stress-test strategies against scenarios outside the historical window. The QCBM noise source is meant to capture heavy tails and asymmetric joint distributions that classical GANs tend to average away.

Regime-conditioned signals

HMM regime labels feed classifiers that route trading logic per state. The same infrastructure is used for execution, risk, and synthetic-data conditioning.

Sparse-data classification

QML models train on the low-data regimes that finance actually has: hundreds of labelled crash days, not millions. The inductive bias of variational circuits helps when classical deep learning overfits.

Walk-forward validation baked in

Architecture search runs walk-forward cross-validation by default, so scores never come from a single leaky train/test split that flatters tree models and then falls apart in production.
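
A minimal sketch of what walk-forward splitting looks like, assuming an expanding training window and equally sized test blocks. The function name and its parameters are hypothetical, and the repo's actual splitter may differ (for example by using a sliding window or an embargo gap).

```python
def walk_forward_splits(n_samples, n_folds, min_train):
    """Yield (train_indices, test_indices) with an expanding train window.

    Every test block lies strictly after its training block in time,
    so no future observation leaks into model fitting.
    """
    test_size = (n_samples - min_train) // n_folds
    if test_size < 1:
        raise ValueError("not enough samples for the requested folds")
    for fold in range(n_folds):
        train_end = min_train + fold * test_size
        test_end = train_end + test_size
        yield list(range(train_end)), list(range(train_end, test_end))
```

With `n_samples=10`, `n_folds=3`, and `min_train=4`, the three folds train on the first 4, 6, and 8 observations and test on the 2 observations that immediately follow each.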